silversurfer

Level 85

Thread author

Verified

Honorary Member

Top Poster

Content Creator

Malware Hunter

Well-known

- Aug 17, 2014

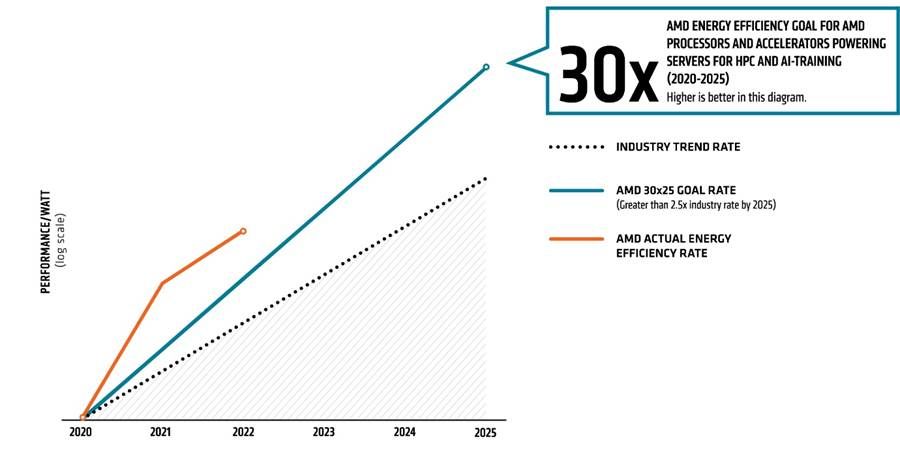

- 10,176

The amount of data generated by people and machines is growing exponentially, which demands a steady increase in datacenter compute performance. To meet the requirements of next-generation datacenters, AMD last year set itself a goal of making its accelerated datacenter platforms for artificial intelligence (AI) and high-performance computing (HPC) workloads 30 times more energy efficient by 2025 than its 2020 platforms.

This week the company shared its progress, and it appears to be advancing well. The energy efficiency of its accelerated platform for AI and HPC, which includes EPYC processors and Instinct compute GPUs, has improved 6.79 times over the 2020 baseline.
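To put the two figures in perspective, here is a rough back-of-the-envelope sketch. It only uses the 30x goal and the 6.79x progress figure quoted above; the function names and the assumption of a constant year-over-year improvement rate are mine, not AMD's methodology.

```python
import math

GOAL_FACTOR = 30.0       # target improvement over the 2020 baseline (from the article)
ACHIEVED_FACTOR = 6.79   # improvement reported so far (from the article)
BASE_YEAR, GOAL_YEAR = 2020, 2025


def remaining_factor(goal: float, achieved: float) -> float:
    """Further multiplicative improvement still needed to reach the goal."""
    return goal / achieved


def annual_rate(factor: float, years: int) -> float:
    """Constant yearly multiplier that compounds to `factor` over `years`.

    Assumption: smooth compounding; real gains arrive in product-generation steps.
    """
    return factor ** (1.0 / years)


def log_progress(goal: float, achieved: float) -> float:
    """Fraction of the goal covered on a logarithmic (compounding) scale."""
    return math.log(achieved) / math.log(goal)


if __name__ == "__main__":
    years = GOAL_YEAR - BASE_YEAR
    print(f"Still needed: {remaining_factor(GOAL_FACTOR, ACHIEVED_FACTOR):.2f}x")
    print(f"Implied yearly gain for 30x: {annual_rate(GOAL_FACTOR, years):.2f}x")
    print(f"Progress on a log scale: {log_progress(GOAL_FACTOR, ACHIEVED_FACTOR):.0%}")
```

In other words, the platform still has to get roughly 4.4 times more efficient, and a 30x gain over five years works out to close to doubling efficiency every year.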

For those who do not remember, AMD's baseline machine for its 30x25 comparisons is a server based on its EPYC 7742 CPU (64C/128T, 2.25GHz – 3.40GHz, 256MB L3 cache, 225W TDP) and four Instinct MI50 compute GPUs (5th Gen GCN, 3840 stream processors at 1450 MHz – 1725 MHz, 300W TDP). This machine produced 5.26 TFLOPS per MI50 on 4k matrix DGEMM with trigonometric data initialization, and 21.6 TFLOPS of FP16 on 4k matrices, while consuming 1582W.
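For a feel of what the baseline means in performance per watt, here is a small sketch using only the FP64 DGEMM figure. It assumes the 5.26 TFLOPS is per GPU (as the text says), that all four GPUs scale linearly, and that the 1582W system draw applies to that workload. None of this is confirmed as AMD's actual efficiency formula, and the helper name is made up for illustration.

```python
# Baseline figures as quoted in the article.
DGEMM_TFLOPS_PER_GPU = 5.26   # FP64 DGEMM throughput per MI50
GPU_COUNT = 4                 # four Instinct MI50 cards in the baseline server
SYSTEM_POWER_W = 1582.0       # power drawn by the whole machine


def gflops_per_watt(tflops_per_gpu: float, gpus: int, watts: float) -> float:
    """System-level GFLOPS per watt.

    Assumption: linear scaling across GPUs, and the power figure
    was measured under this same workload.
    """
    total_gflops = tflops_per_gpu * gpus * 1000.0  # TFLOPS -> GFLOPS
    return total_gflops / watts


if __name__ == "__main__":
    eff = gflops_per_watt(DGEMM_TFLOPS_PER_GPU, GPU_COUNT, SYSTEM_POWER_W)
    print(f"Baseline FP64 efficiency: {eff:.1f} GFLOPS/W")
```

Under those assumptions the 2020 baseline lands at roughly 13.3 GFLOPS per watt for FP64 DGEMM.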


AMD: On Track to Improve Accelerated Datacenter Efficiency by 30x by 2025

Efficiency of AMD's CPUs and GPUs in AI and HPC applications increasing.