Andy Ful

The latest AV-Comparatives Real-World Protection Test (Jul-Aug 2019):

Test chart:

https://www.av-comparatives.org/com...chart_month=Jul-Aug&chart_sort=1&chart_zoom=2

Test document in PDF:

Real-World Protection Test Jul-Aug 2019 – Factsheet | AV-Comparatives

***********************************************************************************

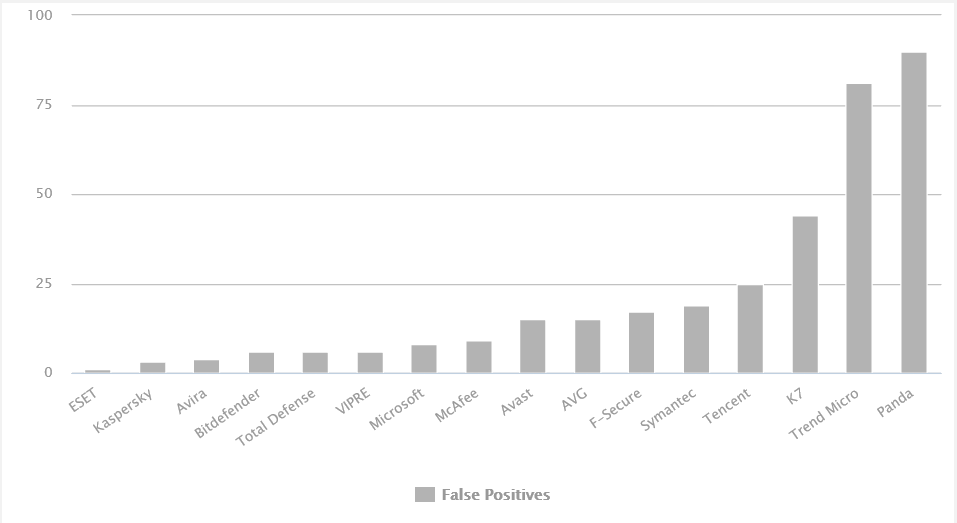

False positives

The false-alarm test in the Whole-Product Dynamic “Real-World” Protection Test consists of two parts: wrongly blocked domains (while browsing) and wrongly blocked files (while downloading/installing).

https://www.av-comparatives.org/real-world-protection-test-methodology/

***********************************************************************************

The AV-Comparatives Real-World test does not tell us how many false positives the AV engine may produce while files are downloaded or executed.

That is tested separately, in the False Alarm tests that accompany the AV-Comparatives Malware Protection tests, for example: False Alarm Test March 2019 | AV-Comparatives

The current Malware Protection test and False Alarm test will probably be published soon.