- Jun 5, 2017

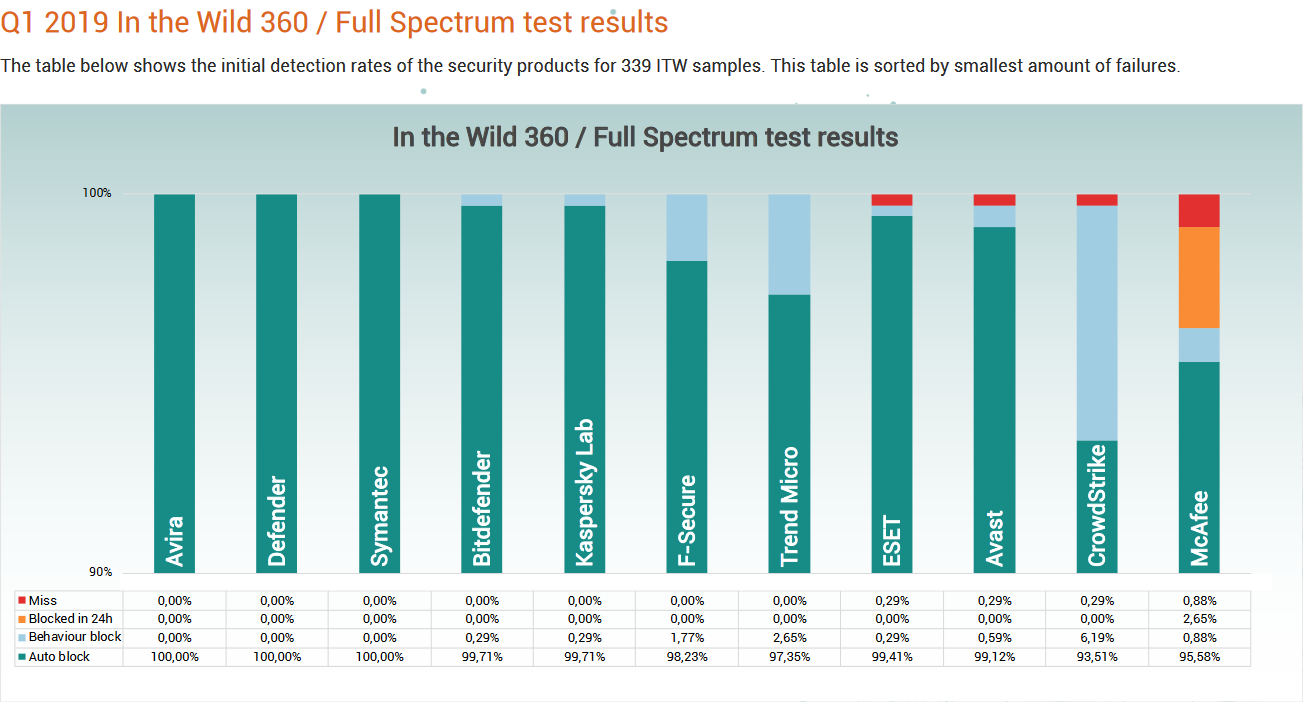

MRG Effitas's Q1 2019 360 Assessment and Certification was released on May 20, 2019. Eleven products were tested against 339 in-the-wild samples on a Windows 10/7 x64 test bench using Chrome.

Results of the additional tests (ransomware, financial malware, PUA/adware, exploit/fileless, false positives, and performance), as well as the methodology, can be found in the full report.

Products Tested:

- Avast Business Antivirus 19.3.2554

- Avira Antivirus Pro - Business Edition 15.0.45.1184

- Bitdefender Endpoint Protection Elite 6.6.10.146

- CrowdStrike Falcon Protect 4.26.8904.0

- Microsoft Windows Defender, Engine: 4.18.904.1

- ESET Endpoint Protection 7.0.2091.0

- F-Secure Computer Protection Premium 19.2

- Kaspersky Small Office Security 19.0.0.1088 (d)

- McAfee Endpoint Security 10.6.0.542

- Symantec Endpoint Protection 22.17.0.183

- Trend Micro Worry-Free Business Security 20.0.1049