A man sits quietly on his porch, a pistol in his hand, pumpkins glowing beside him. Neighbors walk by, the mailman steps over his feet, and teenagers take selfies — thinking it’s just another Halloween prop. Days later, the smell gives him away.

That’s the viral story of Matteo Zayid, the 75-year-old from Marina Del Rey who “died on his own porch and no one noticed.” It’s tragic, cinematic… and completely fake.

The tale of Matteo Zayid never happened. It’s another AI-generated hoax built from synthetic images, machine-written scripts, and emotional manipulation designed to go viral. Here’s how this eerie fiction fooled so many viewers.

Overview: How the “Halloween Prop” Story Went Viral

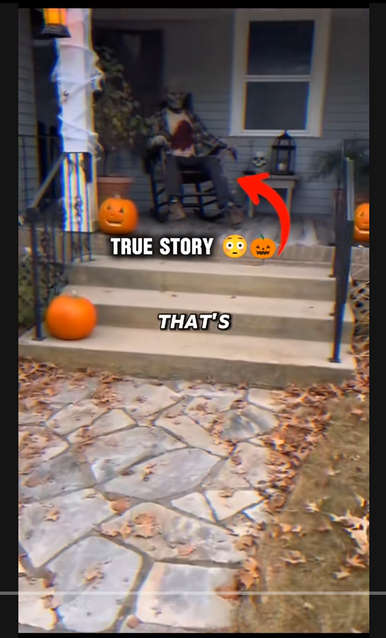

The “Matteo Zayid” video first appeared as a voice-over monologue paired with photorealistic AI imagery: a suburban house at dusk, plastic pumpkins on the steps, a figure in a rocking chair, soft cinematic music, and a narrator delivering the line:

“Did you know about the man who died on his own front porch and was left there for days because everyone thought he was a Halloween prop?”

Within days, dozens of reposts appeared under titles like

“The Saddest Halloween Story Ever Told,”

“When Real Life Looks Too Real,” and

“Matteo Zayid: The Man the World Ignored.”

Each copy used slightly different footage—different houses, different chairs—but the same script, the same melancholy tone, and the same false setting. Comment sections filled with shock and sorrow:

“How could people not notice?”

“This broke my heart.”

“The world is so cruel.”

Few viewers paused to check the facts, because the story felt emotionally true.

That’s the genius—and danger—of AI slop content: believable lies engineered for maximum empathy and shareability.

The Real-World Myth It Mimics

To make the fiction sound authentic, the hoax borrows loosely from real incidents where people’s deaths were mistaken for Halloween displays. In 2014, for example, police in Chillicothe, Ohio, found a woman who had died by suicide and whom passing motorists had initially assumed to be a decoration. News outlets covered that tragedy with sensitivity.

The creators of the Matteo Zayid narrative copied that template but fabricated every detail:

- Name: No public records, obituaries, or census listings for “Matteo Zayid.”

- Date: “Late October 2009” cited in videos, yet no archived news from that time mentions any comparable event in California.

- Location: “Marina Del Rey,” likely chosen because it sounds cinematic and coastal, evoking a contrast between sunshine and death.

- Quotes: “Mailman stepped over the blood,” “neighbors admired it”—no witnesses, no police statements, no publications corroborate any of it.

The story’s power lies not in evidence but in atmosphere.

Everything—lighting, narration, pacing—is tuned to feel like a tragic short film. Viewers are moved first, then tricked into believing.

The Anatomy of an AI Hoax: Matteo Zayid Step-by-Step

1. AI Script Generation

The script fits perfectly into AI storytelling templates: tragic setup, ironic twist, moral reflection.

Prompts like “write a haunting short true story about loneliness mistaken for art” can produce text closely resembling the Matteo Zayid monologue. The repetition of sensory lines (“pumpkin decorations,” “rocking chair,” “smell so strong joggers crossed the street”) is consistent with linguistic patterns common in AI composition.

2. Voice Synthesis

The narrator’s tone in these clips (calm, low, slightly rasped) is characteristic of AI text-to-speech engines such as ElevenLabs or PlayHT. Listen closely and you can hear subtle pacing errors: breaths cut mid-sentence, unnatural pauses, and identical intonation across unrelated videos.

3. AI Imagery

The visuals (porches, street lamps, pumpkins) display unmistakable generative traits:

- Overly glossy lighting and lens flares.

- Inconsistent hand or railing geometry.

- Unrealistic depth of field and object blending.

Such cues suggest that tools like Runway Gen-2, Pika Labs, or Midjourney were used to produce photorealistic stills that were later animated into motion clips.

4. Editing & Distribution

Creators compile the narration and imagery into one-minute clips optimized for short-form platforms. They add eerie music, cinematic subtitles, and a hook line in the first five seconds: “You won’t believe this true story.”

Once a clip is uploaded, the platform’s algorithm rewards engagement, and copycats repost the same clip under new captions to farm additional views.

5. Engagement Farming Cycle

Each re-upload generates comments, which feed more recommendations. Accounts quickly reach tens of thousands of followers, after which they can pivot to promote unrelated products, investment scams, or AI storytelling channels.

Thus, the Matteo Zayid hoax is not about awareness or art—it’s digital bait for monetization.

Why People Fell for It

Emotional Plausibility

Loneliness, neglect, and societal apathy are universal fears. The idea that someone could die unnoticed fits into real social anxieties, making the lie believable.

Seasonal Context

Because the video circulates every October, it benefits from Halloween priming: viewers already expect strange or dark stories. “Man mistaken for decoration” feels plausible amid seasonal news of elaborate horror displays.

Visual Authenticity

Modern generative models produce images that look like DSLR footage. The lighting, reflections, and facial textures pass the “scroll test”: at phone-screen resolution, they seem entirely real.

Algorithmic Reinforcement

Once users watch similar spooky shorts, the platform feeds them more “real horror” stories, creating an echo chamber where fabricated tragedies blend with authentic news segments.

Dissecting the Script: How We Know It’s Synthetic

A close textual analysis reveals repeated AI stylistic fingerprints:

| Phrase Type | Example | Pattern |
|---|---|---|
| Over-dramatic metaphor | “The illusion was perfect. Too perfect.” | Repetition for emphasis common in LLM output |
| Sensory escalation | “Then November arrived. The smell, so strong joggers crossed the street.” | Abrupt temporal leap + visceral imagery |
| Detached narration | “The mailman stepped over the blood to deliver letters.” | Implausible witness detail without attribution |
| Moral conclusion | “He was a man screaming for the world’s attention, even in death.” | Signature AI closure line ending on emotion rather than fact |

These devices appear in countless AI horror monologues and “digital campfire” stories published in 2024-2025.

The Broader Pattern: AI Slop Horror

The Matteo Zayid hoax sits within a wider genre of synthetic morality tales—fabricated “true events” designed to feel like news features.

Other examples include:

- The Librarian Who Turned to Stone (AI fiction about a woman petrified mid-scream).

- The Diver Who Glowed Blue (fake ocean-mutation clip).

- The Photographer Who Vanished in a Flash (AI-generated disappearance story).

Each follows the same production chain: generated script → AI visuals → synthetic voice → viral upload.

Together they flood feeds with emotive noise, blurring truth and fiction for profit.

Ethical and Social Impact

1. Erosion of Empathy Through Manipulation

When audiences invest emotion in a false story, they experience real grief and outrage, but for nothing. Over time, this can dull compassion for genuine victims.

2. Misinformation Fatigue

As fabricated tragedies spread, users grow skeptical even of legitimate news, weakening trust in real journalism and emergency reporting.

3. Platform Responsibility

Algorithms reward watch time, not truth. Unless platforms implement content authenticity checks or visible AI-use labels, such hoaxes will continue to dominate “For You” feeds.

4. Psychological Toll

AI horror blends reality and imagination so seamlessly that vulnerable viewers—especially teens—can experience anxiety, guilt, or existential fear from events that never happened.

Fact-Checking the Fiction

A quick verification dismantles the story entirely:

- No death reports from Marina Del Rey in October 2009 matching the name or description.

- No obituary for any “Matteo Zayid” worldwide.

- No archived news using quoted lines or phrases from the viral narration.

- Reverse image search of screenshots leads only to other AI videos, not photography databases.

- Audio comparison suggests the same synthetic narrator voice recurs across other AI-narrated viral shorts.

In short: every measurable element points to a fully synthetic origin.

Lessons in Media Literacy

To avoid falling for future “Matteo Zayid”-style hoaxes:

- Verify sources before sharing emotionally charged clips.

- Search reputable news outlets; genuine tragedies are documented.

- Use AI-detection tools (such as Sensity AI or detectors hosted on Hugging Face) to analyze suspicious visuals, remembering that their results are indicators, not proof.

- Report content that presents fiction or entertainment as fact.

- Educate peers and students on algorithmic manipulation and digital folklore.

The Narrative Mechanics of Viral Sadness

AI storytellers exploit a structure proven to trigger engagement:

- Hook line: a shocking, empathy-baiting question.

- Tragic backstory: isolation, loss, or societal neglect.

- Irony twist: death mistaken for art, beauty born from pain, etc.

- Moral statement: commentary on human indifference.

It feels profound—but it’s mathematical. Each emotional beat is algorithmically optimized to maximize retention time. Matteo Zayid’s tale isn’t literature; it’s predictive modeling dressed as pathos.

Why “Matteo Zayid” Sounds Real: Cognitive Bias at Work

- Contextual bias: Viewers associate porches and pumpkins with Halloween props, just as the narration says.

- Authority bias: A calm documentary-style voice implies journalistic credibility.

- Emotional anchoring: The mention of age, loss, and isolation triggers empathy shortcuts.

- Availability heuristic: Similar real cases make the fake one feel statistically plausible.

Understanding these biases helps audiences resist manipulation.

The Business Model Behind AI Slop

Every viral hoax has a monetization pipeline:

- Gain followers via shocking “true stories.”

- Rebrand the account into “mystery facts,” “AI horror,” or “did-you-know” compilations.

- Sell shout-outs, merch, or redirect traffic to external links.

- Repeat under new page names once credibility drops.

Because AI production costs almost nothing, creators can generate hundreds of such videos weekly, saturating feeds faster than platforms can moderate them.

Could Something Like This Ever Happen for Real?

Tragically, yes—real cases exist where victims were mistaken for decorations or art installations. But the specific details of Matteo Zayid are fabricated.

When genuine events occur, they leave a trail: statements from local authorities and coverage by verifiable reporters. The difference lies in documentation.

AI hoaxes offer emotion without evidence; real news offers evidence even when emotion is restrained.

Frequently Asked Questions (FAQ)

Who is Matteo Zayid?

Matteo Zayid is a fictional character invented through artificial intelligence storytelling. Viral short videos claim he was a 75-year-old man from Marina Del Rey, California, who took his own life on his front porch and was mistaken for a Halloween prop for several days. In reality, there are no public records, police reports, or news articles confirming that anyone named Matteo Zayid ever existed or died under those circumstances. The story was written and visualized by AI tools to appear authentic.

Is the “Halloween prop” story real?

No. The story is entirely fabricated. Every detail—from the location and date to the eyewitness quotes—was generated by AI text and video programs. The purpose of the content is to shock viewers and encourage sharing. While a few real cases exist where people were mistaken for Halloween decorations, none match the description of Matteo Zayid in Marina Del Rey. The videos simply blend truth-like details with fiction to make the narrative believable.

Why did so many people believe it?

People believed the story because it felt emotionally and visually real. The narration sounds like a true-crime documentary, the imagery looks cinematic, and the emotions—loneliness, neglect, guilt—are universally relatable. When AI storytelling is combined with realistic voiceovers and seasonal timing around Halloween, viewers suspend disbelief and react emotionally instead of critically verifying the facts.

How was the Matteo Zayid hoax created?

Creators used several layers of artificial intelligence to build the illusion. A text-generation model wrote the script in a documentary style. A voice-synthesis tool produced a calm, human-sounding narrator. Image and video generators such as Runway Gen-2 or Pika Labs likely rendered the porch, pumpkins, and rocking chair. Finally, editing software stitched everything together with background music and captions before the video was uploaded to short-form platforms for engagement farming.

What is “AI slop”?

“AI slop” is a term used to describe mass-produced, low-effort AI content designed solely for clicks and watch time. It includes fake stories, deepfake imagery, and auto-generated narration that imitate journalism or documentaries without any factual basis. The Matteo Zayid video is a classic example of AI slop: it floods social media feeds, triggers emotional reactions, and rewards the uploader with followers or ad revenue.

Did this event really happen in 2009 in Marina Del Rey?

No. There are no verified police records, news reports, or coroner reports of such an incident in Marina Del Rey in 2009 or any other year. Searches of local newspaper archives turn up no reference to a death matching the story. The “2009” date and “California” location appear to have been added by the AI-generated script to lend plausibility, and nothing supports them.

What makes this kind of AI hoax dangerous?

Even though the story may seem harmless, repeated exposure to convincing AI fabrications erodes public trust. People become emotionally invested in tragedies that never happened, which dulls empathy for real victims. Hoaxes like this also give scammers ready-made audiences—once an account gains followers through a fake story, it can pivot to promoting products, scams, or misinformation campaigns.

How can you tell if a viral story is AI-generated?

Signs include overly dramatic or perfectly structured narration, visuals with inconsistent lighting or anatomy, lack of verifiable sources outside short-video platforms, and generic phrases such as “based on a true story” without citation. A quick reverse-image search or news search can confirm whether a reported event actually exists. If you find nothing from credible outlets, treat the story as unverified and do not share it as fact.

Why does AI horror spread so quickly online?

Algorithms reward engagement, not accuracy. Emotional or shocking videos keep viewers watching longer, which signals platforms to recommend them to others. Because AI content can be produced rapidly and cheaply, creators flood feeds with dozens of fabricated “true stories,” each competing for attention. The Matteo Zayid tale went viral because it perfectly fit this formula—short, tragic, and believable.

Are any parts of the Matteo Zayid story true?

None of the details are verifiable. While the scenario resembles real incidents that happened elsewhere, Matteo Zayid himself, his supposed house, and the five-day discovery timeline are pure fiction. Every visual and spoken element originates from AI generation, not from cameras or witnesses.

What should viewers do when they see stories like this?

Pause before sharing or commenting. Search trustworthy news sources for confirmation. Use tools such as Google News, Reuters Fact Check, or Snopes to verify claims. If no legitimate reports appear, treat the video as fiction or report it as misleading. Educating friends and family about AI-generated misinformation also helps reduce its reach.

The Bottom Line

The viral Matteo Zayid Halloween Prop story is a product of artificial imagination, not human tragedy.

No man died on a porch in Marina Del Rey in 2009; no neighbors confused a corpse for décor; no photographs exist beyond AI composites.

The video’s creators engineered a perfect storm of sorrow and disbelief to farm attention. Its success demonstrates how AI storytelling now weaponizes empathy for engagement.

When the next “too-perfect true story” appears in your feed—whether about a forgotten man, a golden-skinned rapper, or a miraculous discovery—pause before you share. Ask:

Who published it first? Who verified it? What evidence exists beyond a voice and a vibe?

Because in 2025, the scariest Halloween prop isn’t on anyone’s porch—

it’s the algorithm manufacturing ghosts that never lived.