- Sep 22, 2014

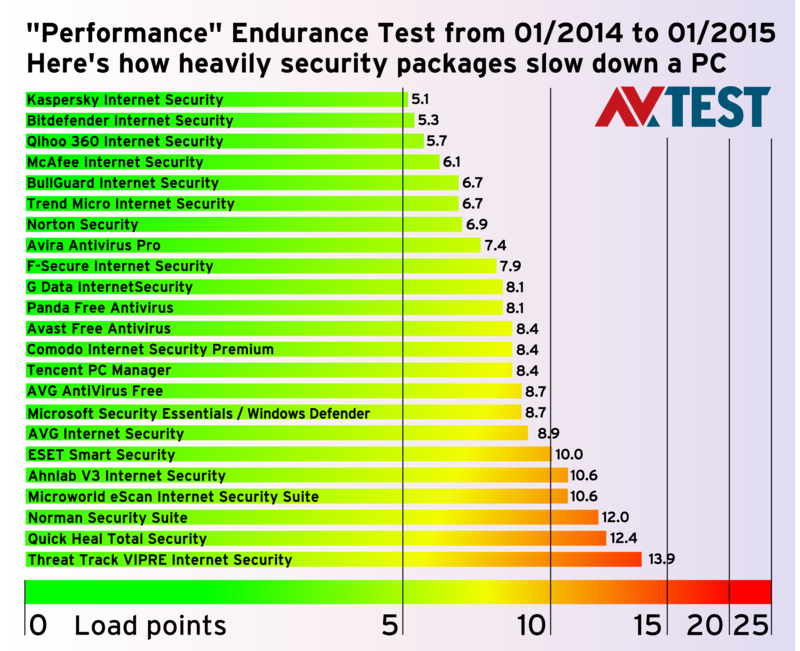

Critics maintain that protection software for Windows really puts the brakes on PCs. In an extremely comprehensive 14-month performance endurance test, AV-TEST examined the trade-off between performance and protection and arrived at some conclusive answers.

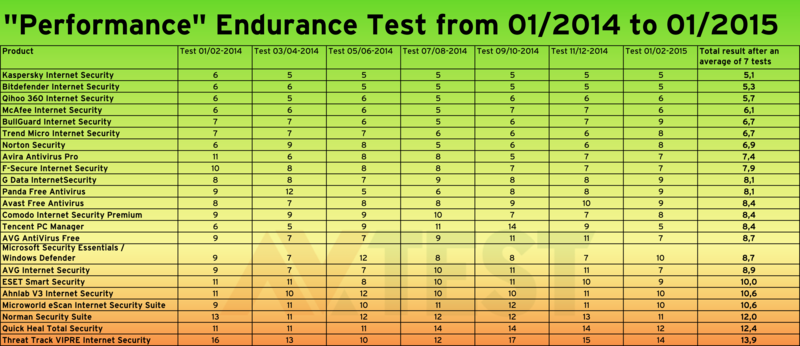

A reader of an endurance test sees a product's speed, or performance, expressed as one single aggregate number. For the tester in the laboratory, arriving at that number is a long journey: each solution has to be accompanied through 7 test rounds over 14 months. The 19,000 individual ratings per product were aggregated into 35 test-area findings, and it takes this much time and effort to rate a single product. In the current endurance test, which ran from January 2014 to the end of February 2015, a total of 23 products were tested for speed in the AV-TEST labs. The test rounds alternated between the operating systems Windows XP, 7 and 8.1.

23 products in the Performance Test

The test included products from Ahnlab, Avast, AVG (freeware and purchase product), Avira, Bitdefender, BullGuard, Comodo, ESET, F-Secure, G Data, Kaspersky, McAfee, Microworld, Norman, Norton, Panda, Qihoo 360, Quick Heal, Tencent, Threat Track and Trend Micro. In addition, a Windows system running the freeware Microsoft Security Essentials, or Defender on Windows 8/8.1, was put through all the tests as an additional point of comparison. A reference system without any protection software served as the baseline against which the measured performance values were later compared. A more in-depth explanation of the reference systems can be found later in the "Test Configuration" section.

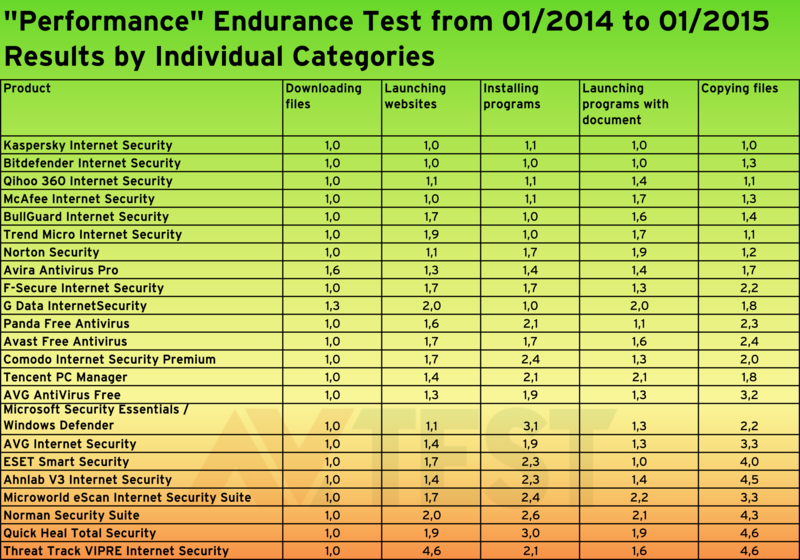

For every product, the testers measured how long a system took to complete various test sets, namely to

- download files from the Internet;

- launch websites;

- install applications;

- open applications, including a file;

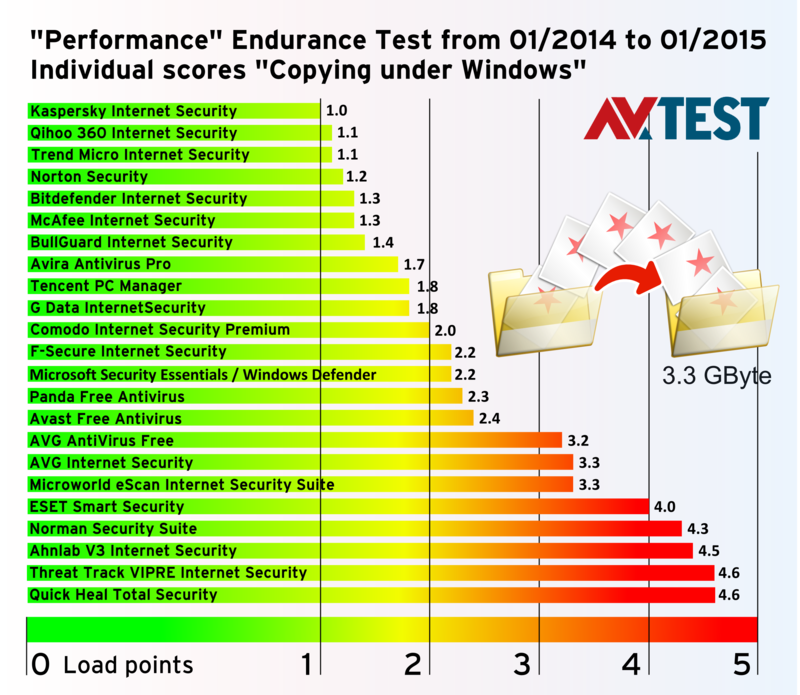

- copy files.

For every test area, load points from 1 to 5 were assigned after each test, where a score of 1 is good and a score of 5 is poor. If an antivirus application slowed a system down by 0 to 20 percent, it received 1 load point. A slowdown of 21 to 40 percent earned 2 points, 41 to 60 percent earned 3 points, and so on up to 5 points.
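The load-point scale can be sketched in a few lines of Python. This is only an illustration, not AV-TEST's own tooling: the function names are hypothetical, the slowdown is assumed to be the extra time relative to the unprotected reference system, the 61 to 80 percent and above-80 percent bands are assumed from "and so on up to 5 points", and fractional values such as 20.5 percent are assumed to fall into the next higher band.

```python
import math


def slowdown_percent(reference_seconds: float, measured_seconds: float) -> float:
    """Extra time needed compared with the reference system, in percent.

    Assumption: slowdown is measured relative to the unprotected
    reference system; a faster-than-reference result counts as 0.
    """
    if reference_seconds <= 0:
        raise ValueError("reference_seconds must be positive")
    extra = (measured_seconds - reference_seconds) / reference_seconds * 100.0
    return max(0.0, extra)


def load_points(slowdown: float) -> int:
    """Map a slowdown in percent to load points (1 = good, 5 = poor).

    0-20 % -> 1, 21-40 % -> 2, 41-60 % -> 3,
    61-80 % -> 4 (assumed), above 80 % -> 5 (assumed).
    """
    if slowdown < 0:
        raise ValueError("slowdown must not be negative")
    # One band per 20 percentage points, clamped to the 1..5 scale.
    return min(5, max(1, math.ceil(slowdown / 20.0)))


if __name__ == "__main__":
    # Hypothetical timings: 10.0 s on the reference system, 12.5 s with protection.
    pct = slowdown_percent(10.0, 12.5)
    print(pct, load_points(pct))
```

With the hypothetical timings above, the protected system needs 25 percent longer and therefore lands in the 21 to 40 percent band.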

Across all 5 test areas, this yields a best possible score of 5 and a worst possible score of 25 load points per test round. All partial results were added up and finally divided by 7, the number of test rounds.
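As a rough sketch of that aggregation, assuming each round contributes exactly one load-point value per test area, the final score is the mean of the per-round totals. The function name and the sample data below are hypothetical and only illustrate the arithmetic.

```python
from typing import Sequence

TEST_AREAS = 5   # download, launch websites, install, open, copy
TEST_ROUNDS = 7  # rounds in the 14-month endurance test


def endurance_score(rounds: Sequence[Sequence[int]]) -> float:
    """Average the per-round load-point totals over all test rounds.

    `rounds` holds one sequence per round with the load points (1..5)
    for each of the five test areas. The result lies between 5 and 25.
    """
    if len(rounds) != TEST_ROUNDS:
        raise ValueError(f"expected {TEST_ROUNDS} rounds, got {len(rounds)}")
    total = 0
    for points in rounds:
        if len(points) != TEST_AREAS:
            raise ValueError(f"expected {TEST_AREAS} test areas per round")
        if any(p < 1 or p > 5 for p in points):
            raise ValueError("load points must be between 1 and 5")
        total += sum(points)
    return total / TEST_ROUNDS


if __name__ == "__main__":
    # Hypothetical product: 1 point everywhere, except 2 points
    # for copying files in every round.
    sample = [[1, 1, 1, 1, 2] for _ in range(TEST_ROUNDS)]
    print(endurance_score(sample))
```

A product that earns 1 load point in every area in every round ends up at the perfect score of 5, while 5 points everywhere gives the worst score of 25.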
