By: Matthew Eidelberg

PART 1 OF A SERIES.

The primary goal of an attacker is to achieve a specific objective without being detected. This often involves establishing a foothold in an environment and then moving laterally. In a real-life compromise an attacker operates with little to no information about the target’s security controls. As a result, they must adjust their tactics to focus on remaining undetected by an unpredictable and potentially wide spectrum of security controls. Controls mature over time and new technologies have been developed, prompting attackers of all types to adapt and improve their tactics to maximize their likelihood of success.

From the perspective of an attacker, one of the more challenging of these newer technologies is endpoint detection and response (EDR), which is often touted as the future of antivirus (AV). Traditional AV is designed primarily to facilitate prevention and detection of malicious code using a combination of signatures and heuristic analysis. EDR, however, has two primary jobs: detection of malicious behaviors or other signatures and facilitation of analysis and incident response (IR). Because of this, EDR solutions have become a de facto requirement for comprehensive defense against attackers.

EDR is specifically designed to detect suspicious behaviors occurring on the endpoint. These behaviors may include a range of attack techniques, such as process execution or injection and image loads in memory. Once those behaviors have been identified, the second role of EDR comes into play, as the tools are leveraged by defenders and incident responders to take action based on the detection. This triage response may include isolating the compromised host from the network, facilitating the collection of endpoint logs, reviewing the timeline of events, collecting and documenting threat indicators or even allowing for the termination of suspect processes.

Attackers respond to the frequent deployment of these technologies by developing new, highly sophisticated techniques to evade detection on disk and in memory. These techniques extend beyond the traditional initial compromise vectors and are often utilized in post-exploitation techniques to prevent any type of detection throughout the attacker’s operation. The key to these capabilities, wherein the EDR technology makes many decisions in milliseconds, is derived from its ability to hook into all running processes on the host.

This project would not have been possible without the high-quality work from those before us. Many people have publicized ground-breaking research in reviewing EDRs and memory hooks, focusing on ways to circumvent specific products. The intent of this article is to go deeper on the subject, not focusing on techniques that only work on specific products but instead identifying systemic issues across all EDR products and how attackers can utilize them to bypass any EDR product without needing prior knowledge of a client’s security stack. This involves diving deeper on these previously discussed concepts as well as discovering new ones.

If you are interested in learning more, here are a few additional sources:

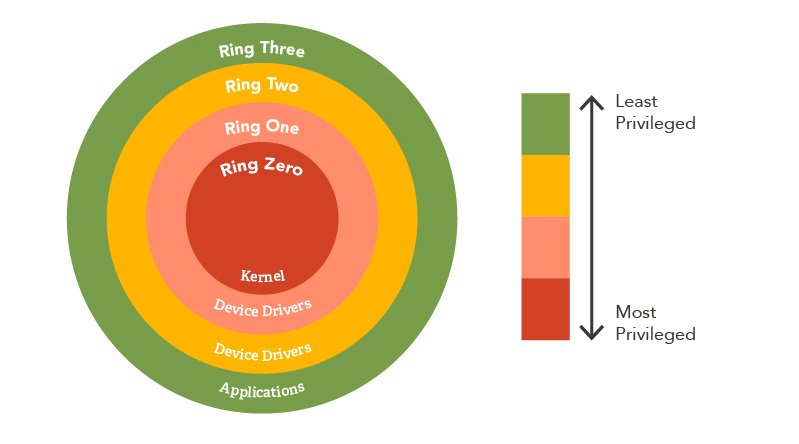

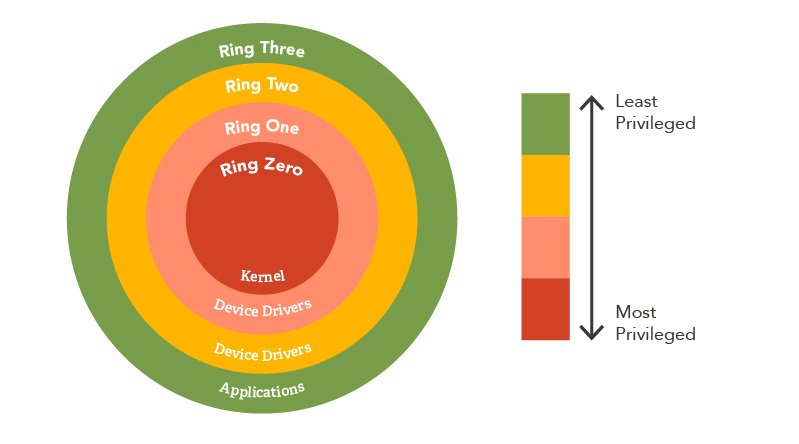

These hooks send the data to the EDR agent running on the endpoint for the telemetry to be processed in real time. The agent is often installed at the kernel level, the highest privileged access. There are two major reasons for this; the first is to avoid being shut down or removed by an attacker, as it isn't easy to gain access to services running in the kernel. To be exploited they typically require some sort of vulnerability or the attacker must already have obtained high-integrity privileges on the endpoint. Since attackers operate with a “black box” mentality, it’s typically assumed that the initial foothold does not already have these privileges and they must be acquired through post-exploitation activity, which may be caught by an EDR if not carefully executed. Another reason these products run in the kernel is that it provides the EDR access to control and monitor the entire system.

Figure 1: Privilege Map

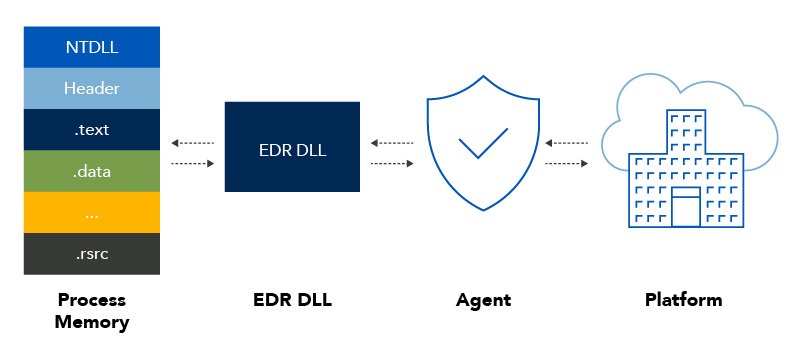

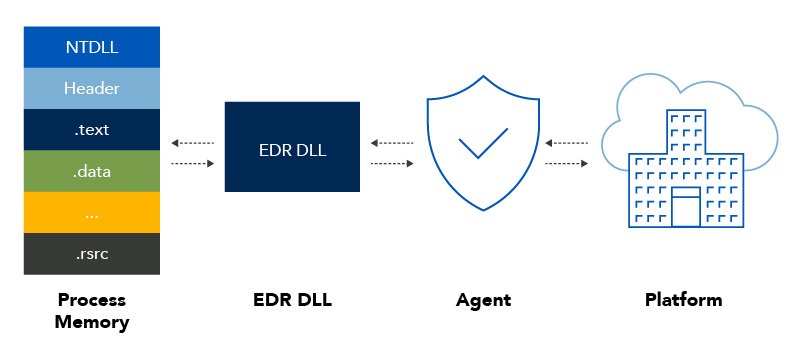

Agents often place hooks into the process by loading a DLL they own, which remaps functions already loaded. This allows the agent to monitor each and every process, gathering telemetry. The agent is constantly receiving information monitoring all changes in the process, on disk and even network communications. The agent passes the data over to the product’s cloud-based platform. This is where all data telemetry is processed into actionable data that can be classified as either malicious or benign behavior. While a majority of the preventative controls are performed by the agent, often times modifications to attack techniques can circumvent the agent’s initial detections. This is where the capabilities of the EDR’s platform really shine in identifying malicious behavior based on all the data gathered in the wild.

Figure 2: EDR Telemetry Diagram

For example, a process spawns a new process in a suspended state and modifies the memory permissions on that new process, attempting to execute a WriteProcessMemory procedure. The data written is encrypted and doesn’t trigger any malicious indicators for the agent; however, the telemetry sent to the EDR platform can still view this data and make a determination to identify these events as a potential process hollowing technique. All of this happens in less than a second, ensuring threats are not successful, which means the agent needs numerous data points to make these analytic decisions.

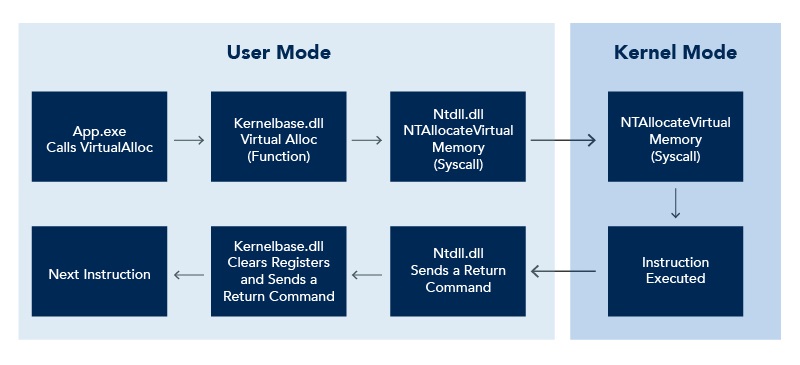

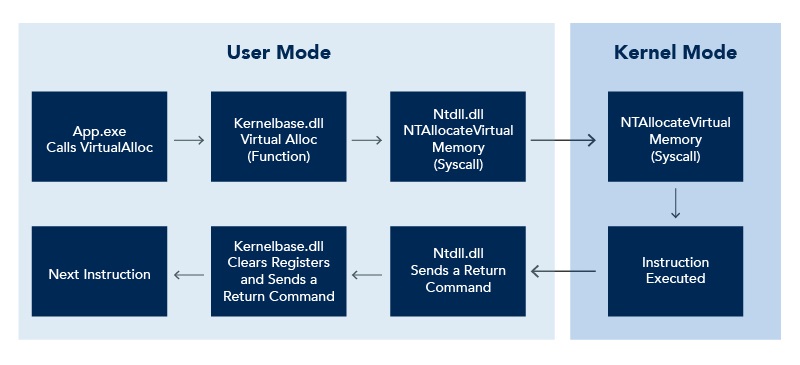

To further understand how this data flow works, we need to know a bit about Windows architecture. For starters, the Windows system offers a large set of functions and API calls that an application can utilize to execute code. The primary function of the Windows APIs is to align all the stack registers before calling the syscall to execute the low-level instruction.

Syscalls such as NTAllocateVirtualMemory provide a low-level interface to allow a process to interact with the operating system. These are low-level assembly instructions transition to the kernel and are used to tell a CPU to perform an action such as allocating memory, creating a file or writing data stored in a specific buffer to disk. These syscalls reside in the ntdll.dll dynamic linked library (DLL), and while many of them are not documented, syscalls cannot be directly called as they only perform a low-level assembly instruction.

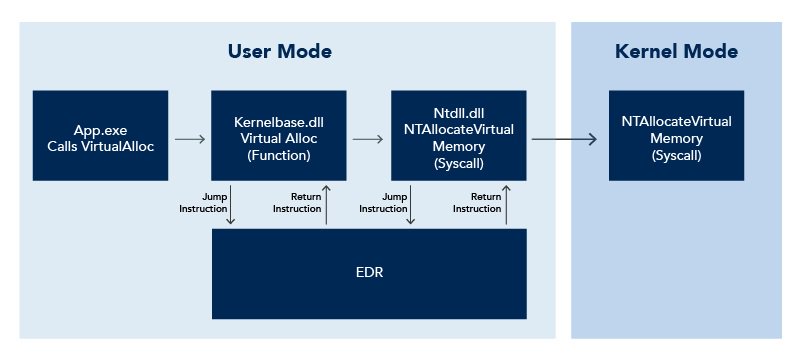

Figure 3: Execution Flow Example

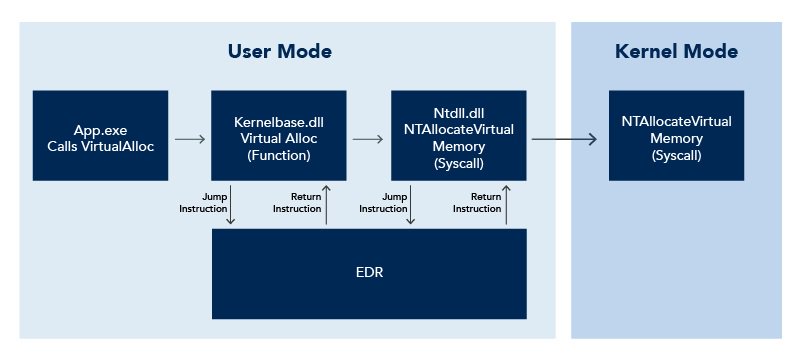

When a process is executed, system DLLs are loaded, at which point the EDR agent hooks specific API functions and syscalls such as VirtualAlloc and NTAllocateVirtualMemory. It is important to note that each EDR platform hooks different functions and syscalls, which provides different telemetry which in turn yields different information and different detections. As the execution flows, the EDR hook triggers, forcing the execution to jump from the system DLL to the EDR DLL, at which point the EDR performs a series of instructions and then returns the execution to the system DLL.

Figure 4: Execution flow Example – EDR Hooked

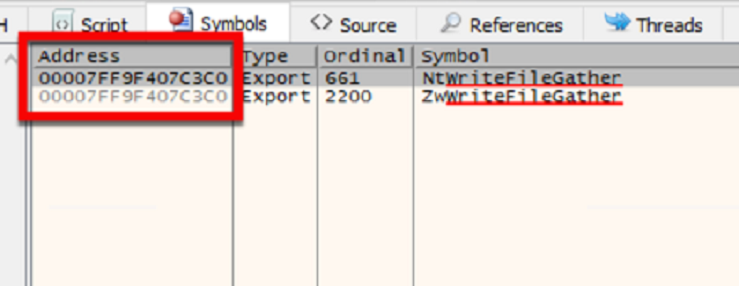

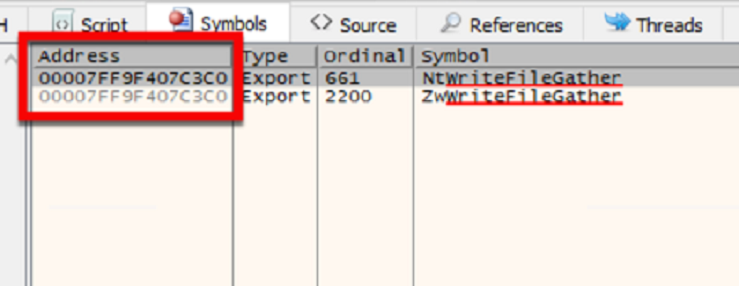

As you can see, the same syscall is called twice. This is due to the transition between user mode and kernel mode. System calls may be prefixed with the characters NT or ZW. NT syscalls represent calls from User Mode, while ZW syscalls indicate a Kernel Mode call. Regardless of the prefix, the underlying syscall instruction is identical.

Figure 5: NT and ZW Prefixes

User mode is often more appealing to attackers as it has no way of directly accessing the underlying hardware. Code that runs in user mode must use API functions that interact with the hardware on behalf of the application, allowing for more stability and fewer system-wide crashes (as application crashes will not affect the system). As a result, applications that run in user mode need minimal privileges and are more stable. Suffice to say, a lot of EDR products rely heavily on user mode hooks over kernel mode, making things interesting for attackers.

Since the hooks exist in user mode and hook into our processes, we have control over them. Since applications run within the user’s context, this means everything that's loaded into our process can be manipulated by the user in some form or another. It’s important to note that some sensitive regions of memory are set to Execute, Read (ER-), which prevents the modification of these regions. We’ll discuss some techniques to navigate around this below. "

...

Full article:

Endpoint Detection and Response: How Hackers Have Evolved | Optiv

This is an excellent article about bypassing AVs and how AVs can prevent it.


" Endpoint Detection and Response: How Hackers Have Evolved


The primary goal of an attacker is to achieve a specific objective without being detected. This often involves establishing a foothold in an environment and then moving laterally. In a real-life compromise, an attacker operates with little to no information about the target’s security controls, so they must adjust their tactics to stay undetected by an unpredictable and potentially wide spectrum of controls. As those controls mature and new technologies emerge, attackers of all types adapt and refine their tactics to maximize their likelihood of success.

From the perspective of an attacker, one of the more challenging of these newer technologies is endpoint detection and response (EDR), which is often touted as the future of antivirus (AV). Traditional AV is designed primarily to prevent and detect malicious code using a combination of signatures and heuristic analysis. EDR, however, has two primary jobs: detecting malicious behaviors or signatures, and facilitating analysis and incident response (IR). Because of this, EDR solutions have become a de facto requirement for comprehensive defense against attackers.

EDR is specifically designed to detect suspicious behaviors occurring on the endpoint. These behaviors may include a range of attack techniques, such as process execution, process injection and image loads into memory. Once those behaviors have been identified, the second role of EDR comes into play: defenders and incident responders use the tools to act on the detection. This triage response may include isolating the compromised host from the network, collecting endpoint logs, reviewing the timeline of events, collecting and documenting threat indicators or even terminating suspect processes.

Attackers have responded to the widespread deployment of these technologies by developing new, highly sophisticated techniques to evade detection on disk and in memory. These techniques extend beyond the traditional initial compromise vectors and are often used during post-exploitation to avoid detection throughout the attacker’s operation. The key to EDR’s capabilities, which let it make many decisions in milliseconds, is its ability to hook into all running processes on the host.

This project would not have been possible without the high-quality work of those before us. Many people have published ground-breaking research on EDRs and memory hooks, focusing on ways to circumvent specific products. The intent of this article is to go deeper on the subject. Rather than focusing on techniques that only work against specific products, it identifies systemic issues across all EDR products and shows how attackers can use them to bypass any EDR product without prior knowledge of a client’s security stack. This involves diving deeper into these previously discussed concepts as well as discovering new ones.

If you are interested in learning more, here are a few additional sources:

- Bypass EDR’s memory protection, introduction to hooking - Hoang Bui

- Universal Unhooking: Blinding Security Software - Jeffrey Tang

- Let’s Create An EDR… And Bypass It! - CCob

- Pushing back on userland hooks with Cobalt Strike - Raphael Mudge

What is hooking?

Hooking is a technique for altering the behavior of an application. EDR tools use it to monitor the execution flow inside a process, gather information for behavior-based analytics and detect suspicious and malicious activity. This allows for more accurate detection of post-initial-compromise techniques (e.g., code execution) as well as post-exploitation techniques (e.g., privilege escalation, lateral movement or ransomware activity).

These hooks send data to the EDR agent running on the endpoint so the telemetry can be processed in real time. The agent often runs at least partly at the kernel level (for example, as a driver), which carries the highest privileges. There are two major reasons for this. The first is to avoid being shut down or removed by an attacker, since services running in the kernel are not easy to access. Tampering with them typically requires exploiting a vulnerability, or the attacker must already have obtained high-integrity privileges on the endpoint. Because attackers operate with a “black box” mentality, it is typically assumed that the initial foothold does not have these privileges. They must be acquired through post-exploitation activity, which an EDR may catch if it is not carefully executed. The second reason is that running in the kernel gives the EDR the access it needs to control and monitor the entire system.

Figure 1: Privilege Map

Agents often place hooks into a process by loading a DLL they own, which modifies functions in system DLLs that are already loaded so that calls to them are redirected through the EDR’s code. This allows the agent to monitor every process and gather telemetry. The agent continuously receives information about changes in the process, on disk and even in network communications, and passes that data to the product’s cloud-based platform. There, the telemetry is processed into actionable data that can be classified as malicious or benign behavior. While the agent performs most of the preventative controls, modified attack techniques can often circumvent its initial detections. This is where the EDR platform really shines, identifying malicious behavior based on all the data gathered in the wild.

Figure 2: EDR Telemetry Diagram
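To make the "redirect" step concrete, here is a sketch of one common inline-hook style on x86/x64. The first five bytes of the target function are overwritten with a near `jmp rel32` (opcode `E9`) pointing at the handler. The CPU measures that displacement from the end of the 5-byte instruction. The addresses below are made up, and real products may use other hook styles, such as longer absolute jumps or trampolines.

```python
import struct

JMP_REL32 = 0xE9  # x86/x64 near jump with a signed 32-bit displacement
JMP_LEN = 5       # 1 opcode byte + 4 displacement bytes


def build_jmp_patch(hook_site: int, handler: int) -> bytes:
    """Return the 5 bytes that redirect execution from hook_site to handler."""
    # The displacement is relative to the address of the *next* instruction.
    rel = handler - (hook_site + JMP_LEN)
    if not -2**31 <= rel < 2**31:
        raise ValueError("handler is out of rel32 range; a longer jump is needed")
    return bytes([JMP_REL32]) + struct.pack("<i", rel)


def jmp_target(hook_site: int, patch: bytes) -> int:
    """Decode a jmp rel32 patch back into its absolute destination."""
    if patch[0] != JMP_REL32:
        raise ValueError("not a jmp rel32")
    (rel,) = struct.unpack("<i", patch[1:JMP_LEN])
    return hook_site + JMP_LEN + rel


# Hypothetical addresses: a function in a system DLL and a handler in the EDR's DLL.
site, handler = 0x7FFA_1000_2000, 0x7FFA_0F00_0000
patch = build_jmp_patch(site, handler)
```

With these addresses the patch is `E9 FB DF FF FE`, and decoding it yields the handler address again.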

For example, a process spawns a new process in a suspended state, modifies the memory permissions of that new process and then calls WriteProcessMemory to write into it. The data written is encrypted and doesn’t trigger any malicious indicators on the agent. However, the telemetry sent to the EDR platform still records these events, so the platform can correlate them and identify a potential process hollowing technique. All of this must happen in less than a second for the threat to be stopped before it succeeds, which is why the agent needs numerous data points to make these analytic decisions.
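To illustrate how individually benign-looking events might be correlated, here is a hypothetical rule in Python. Several things are assumptions made for this example: the `Event` schema, the field names, and the exact step sequence (suspended process creation, a protection change, a write, then a thread resume, all against the same target). Real EDR analytics are proprietary and far richer. Real process hollowing also typically involves further steps that this rule ignores.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Event:
    source_pid: int
    api: str
    target_pid: int
    suspended: bool = False  # only meaningful for process creation


# Order in which this hypothetical rule expects the steps to occur.
HOLLOWING_SEQUENCE = ("CreateProcess", "VirtualProtectEx", "WriteProcessMemory", "ResumeThread")


def flag_possible_hollowing(events):
    """Return target PIDs whose events contain the full sequence, in order."""
    progress = {}  # (source_pid, target_pid) -> index of the next expected step
    flagged = set()
    for ev in events:
        key = (ev.source_pid, ev.target_pid)
        step = progress.get(key, 0)
        if step == 0:
            # A chain only starts with a child created in a suspended state.
            if ev.api == HOLLOWING_SEQUENCE[0] and ev.suspended:
                progress[key] = 1
            continue
        # Unrelated events in between are skipped rather than resetting the chain.
        if ev.api == HOLLOWING_SEQUENCE[step]:
            step += 1
            if step == len(HOLLOWING_SEQUENCE):
                flagged.add(ev.target_pid)
                step = 0  # chain complete; start looking for a new one
            progress[key] = step
    return flagged


timeline = [
    Event(100, "CreateProcess", 200, suspended=True),
    Event(100, "VirtualProtectEx", 200),
    Event(100, "WriteProcessMemory", 200),  # payload is encrypted, so content looks benign
    Event(100, "ResumeThread", 200),
    Event(100, "CreateProcess", 300),       # ordinary, non-suspended child
    Event(100, "WriteProcessMemory", 300),
]
```

Here `flag_possible_hollowing(timeline)` flags only PID 200, even though no single event carried a malicious payload signature.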

To understand how this data flows, we need to know a bit about Windows architecture. For starters, Windows offers a large set of API functions that an application can use to execute code. The primary job of many of these API functions is to prepare the arguments, placing them in the registers and on the stack as the calling convention requires, before the syscall is made to carry out the low-level operation.
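The "prepare the arguments" role is easiest to see by comparing signatures. The documented `VirtualAlloc(lpAddress, dwSize, flAllocationType, flProtect)` eventually reaches the native `NtAllocateVirtualMemory`, possibly through intermediate functions depending on the Windows version. The native function takes a process handle, in/out pointers for the base address and region size, and a `ZeroBits` argument. The Python below is a simplified model, not Windows code. The `Ref` class, the recorded `calls` list and the returned address are hypothetical. The constants and the `-1` current-process pseudo-handle match the documented Windows values.

```python
from dataclasses import dataclass

NT_CURRENT_PROCESS = -1  # pseudo-handle, as returned by GetCurrentProcess()
MEM_COMMIT, MEM_RESERVE, PAGE_READWRITE = 0x1000, 0x2000, 0x04
PAGE_SIZE = 0x1000


@dataclass
class Ref:
    """Models an in/out pointer argument such as PVOID *BaseAddress."""
    value: int


calls = []  # records what the modeled native layer received


def nt_allocate_virtual_memory(process_handle, base_address: Ref, zero_bits,
                               region_size: Ref, allocation_type, protect):
    calls.append((process_handle, base_address.value, zero_bits,
                  region_size.value, allocation_type, protect))
    # Pretend the kernel chose an address and rounded the size up to a page.
    if base_address.value == 0:
        base_address.value = 0x20000
    region_size.value = -(-region_size.value // PAGE_SIZE) * PAGE_SIZE
    return 0  # STATUS_SUCCESS


def virtual_alloc(lp_address, dw_size, fl_allocation_type, fl_protect):
    """Simplified wrapper: reshape the arguments, then call the native layer."""
    base, size = Ref(lp_address), Ref(dw_size)
    status = nt_allocate_virtual_memory(NT_CURRENT_PROCESS, base, 0, size,
                                        fl_allocation_type, fl_protect)
    return base.value if status == 0 else 0  # NULL on failure, like VirtualAlloc


addr = virtual_alloc(0, 100, MEM_COMMIT | MEM_RESERVE, PAGE_READWRITE)
```

Both layers are natural hook points. A hook on the wrapper sees the friendly arguments, while a hook on the native function sees every caller, including code that skips the wrapper.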

Syscalls such as NtAllocateVirtualMemory provide a low-level interface that allows a process to interact with the operating system. They are short sequences of low-level assembly instructions that transition to the kernel and ask it to perform an action, such as allocating memory, creating a file or writing data stored in a specific buffer to disk. These syscall stubs reside in ntdll.dll, a dynamic-link library (DLL), and many of them are undocumented. Because each stub only performs that low-level transition, applications are generally not expected to call them directly. Instead, they go through the higher-level Windows API functions that wrap them.

Figure 3: Execution Flow Example
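On x64 Windows, an unhooked `Nt*` export in ntdll.dll typically begins with `mov r10, rcx` (bytes `4C 8B D1`). This is followed by `mov eax, <syscall number>` (`B8` plus a 32-bit immediate), with the `syscall` instruction (`0F 05`) shortly after. The sketch below parses the syscall number out of such a byte sequence. The sample bytes follow the commonly seen Windows 10 x64 layout, but the value `0x18` is only an example, since syscall numbers change between Windows builds. On a live system, the bytes would be read from the address returned by `GetProcAddress(GetModuleHandleA("ntdll.dll"), "NtAllocateVirtualMemory")`.

```python
import struct

STUB_PROLOGUE = bytes.fromhex("4c8bd1b8")  # mov r10, rcx ; mov eax, imm32
SYSCALL = bytes.fromhex("0f05")


def syscall_number(stub: bytes) -> int:
    """Extract the syscall number from an unhooked x64 Nt* stub."""
    if not stub.startswith(STUB_PROLOGUE):
        raise ValueError("unexpected prologue (the stub may be hooked)")
    if SYSCALL not in stub:
        raise ValueError("no syscall instruction found in the sampled bytes")
    (number,) = struct.unpack_from("<I", stub, len(STUB_PROLOGUE))
    return number


# Illustrative stub bytes:
#   mov r10, rcx / mov eax, 18h / test byte ptr [7FFE0308h], 1 / jne +3 /
#   syscall / ret / int 2Eh / ret
sample = bytes.fromhex("4c8bd1b818000000f604250803fe7f0175030f05c3cd2ec3")
```

Here `syscall_number(sample)` returns `0x18`. A stub whose first bytes were overwritten by a hook fails the prologue check instead.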

When a process starts, system DLLs are loaded, at which point the EDR agent hooks specific API functions and syscalls such as VirtualAlloc and NtAllocateVirtualMemory. It is important to note that each EDR platform hooks different functions and syscalls. This yields different telemetry and, in turn, different information and detections. As execution flows, the EDR hook triggers and forces execution to jump from the system DLL to the EDR DLL. The EDR then runs a series of instructions before returning execution to the system DLL.

Figure 4: Execution Flow Example – EDR Hooked
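Because the hook sits in the first bytes of the function, code inside the process can compare those bytes with the expected stub prologue to check whether an export has been redirected. The classifier below is a minimal sketch. It recognizes only the unhooked x64 prologue and a leading `jmp rel32` (`E9`). Other patch styles would be reported as `"unknown"`. The hooked byte sequence and the addresses in the example are fabricated.

```python
import struct

CLEAN_PROLOGUE = bytes.fromhex("4c8bd1b8")  # mov r10, rcx ; mov eax, imm32


def classify_prologue(address: int, head: bytes):
    """Return ('clean', None), ('hooked', jump_target) or ('unknown', None)."""
    if head.startswith(CLEAN_PROLOGUE):
        return "clean", None
    if head[:1] == b"\xe9" and len(head) >= 5:
        (rel,) = struct.unpack_from("<i", head, 1)
        return "hooked", address + 5 + rel  # where the detour lands
    return "unknown", None


clean = bytes.fromhex("4c8bd1b818000000")
hooked = bytes.fromhex("e9fbdffffe000000")  # fabricated jmp into an "EDR" DLL
```

For the fabricated bytes at address `0x7FFA10002000`, the detour resolves to `0x7FFA0F000000`, which would lie inside the EDR's DLL in this scenario.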

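As you can see, the same syscall is called twice. This is due to the transition between user mode and kernel mode. System calls may be prefixed with the characters Nt or Zw. Broadly, the Nt routines are the entry points that user-mode requests arrive at. Kernel-mode code calls the Zw routines so that the request is treated as coming from kernel mode. From user mode the two are interchangeable, and ntdll.dll exports both names at the same address. Regardless of the prefix, the underlying syscall instruction is identical.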

Figure 5: NT and ZW Prefixes
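One way to check that the prefixes alias each other in user mode is to compare export addresses: each `Zw*` name should resolve to the same stub as its `Nt*` twin. The export table below is a hand-written stand-in with made-up relative addresses, not data read from a real ntdll.dll. Reading the real table would require a PE parser.

```python
# Hypothetical slice of ntdll.dll's export table: name -> relative virtual address.
exports = {
    "NtAllocateVirtualMemory": 0x9D2E0,
    "ZwAllocateVirtualMemory": 0x9D2E0,
    "NtWriteVirtualMemory": 0x9D6A0,
    "ZwWriteVirtualMemory": 0x9D6A0,
    "RtlAllocateHeap": 0x3A0C0,
}


def nt_zw_aliases(table):
    """Map each Nt* export to True if its Zw* twin resolves to the same address."""
    return {
        name: table.get("Zw" + name[2:]) == rva
        for name, rva in table.items()
        if name.startswith("Nt")
    }
```

Run against this table, every `Nt*` entry maps to `True`, meaning a hook on the stub sees calls made under either name.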

So why do EDRs hook user mode?

While kernel mode is the most privileged level of access, it comes with several drawbacks that complicate EDR effectiveness. Visibility in kernel mode can be quite limited, as several data points are only available in user mode. Also, third-party kernel drivers are often difficult to develop and, if not properly vetted, increase the chance of system instability. The kernel is often regarded as the most fragile part of a system, and any panic or error in kernel-mode code can cause huge problems, even crashing the system entirely.

User mode is often the more appealing place to hook, as code running there has no way of directly accessing the underlying hardware. Code that runs in user mode must use API functions that interact with the hardware on its behalf. This allows for more stability and fewer system-wide crashes, since an application crash does not take down the system. As a result, applications that run in user mode need minimal privileges and are more stable. Suffice it to say, many EDR products rely heavily on user-mode hooks rather than kernel-mode ones, which makes things interesting for attackers.

Since the hooks exist in user mode and are placed inside our own processes, we have control over them. Because applications run within the user’s context, everything loaded into our process can be manipulated by the user in some form or another. It’s important to note that some sensitive regions of memory are set to Execute, Read (ER-), which prevents the modification of these regions. We’ll discuss some techniques to navigate around this below."
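The protection check is what stands between a process and the hooked code inside it. The Python model below is a simulation, not Windows code. It treats a code page as execute/read-only and refuses writes while the page is in that state. It also lets the owning process change the protection first, which is the role `VirtualProtect` plays on Windows and one commonly described way around the restriction. The `CodePage` class, `restore_prologue` and the page contents are hypothetical. The protection constants use the documented Windows values, and the writable set is simplified (copy-on-write flags are ignored).

```python
PAGE_READWRITE = 0x04
PAGE_EXECUTE_READ = 0x20
PAGE_EXECUTE_READWRITE = 0x40
WRITABLE = {PAGE_READWRITE, PAGE_EXECUTE_READWRITE}  # simplified


class CodePage:
    """Toy model of one memory page with a Windows-style protection value."""

    def __init__(self, data: bytes, protect: int = PAGE_EXECUTE_READ):
        self.data = bytearray(data)
        self.protect = protect

    def write(self, offset: int, payload: bytes) -> None:
        if self.protect not in WRITABLE:
            raise PermissionError("access violation: page is not writable")
        self.data[offset:offset + len(payload)] = payload

    def virtual_protect(self, new_protect: int) -> int:
        """Change the protection and return the old value, like VirtualProtect's out-param."""
        old, self.protect = self.protect, new_protect
        return old


def restore_prologue(page: CodePage, offset: int, original: bytes) -> None:
    """Overwrite a hook with known-good bytes, then put the old protection back."""
    old = page.virtual_protect(PAGE_EXECUTE_READWRITE)
    try:
        page.write(offset, original)
    finally:
        page.virtual_protect(old)


page = CodePage(bytes.fromhex("e9fbdffffe000000"))  # fabricated hooked stub
```

A direct `page.write(...)` raises `PermissionError` while the page is ER. `restore_prologue` succeeds and leaves the page execute/read-only again afterward.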

...

Full article:

Endpoint Detection and Response: How Hackers Have Evolved | Optiv

This is an excellent article about bypassing AV and EDR products and how those products can try to prevent it.
