Gandalf_The_Grey

Thread author

Gartner has issued a stunning warning to its customers: AI browsers are a major cybersecurity risk and should be blocked for the foreseeable future.

“Agentic browsers, or what many call AI browsers, have the potential to transform how users interact with websites and automate transactions while introducing critical cybersecurity risks,” Gartner says. “CISOs [Chief Information Security Officers] must block all AI browsers in the foreseeable future to minimize risk exposure.”

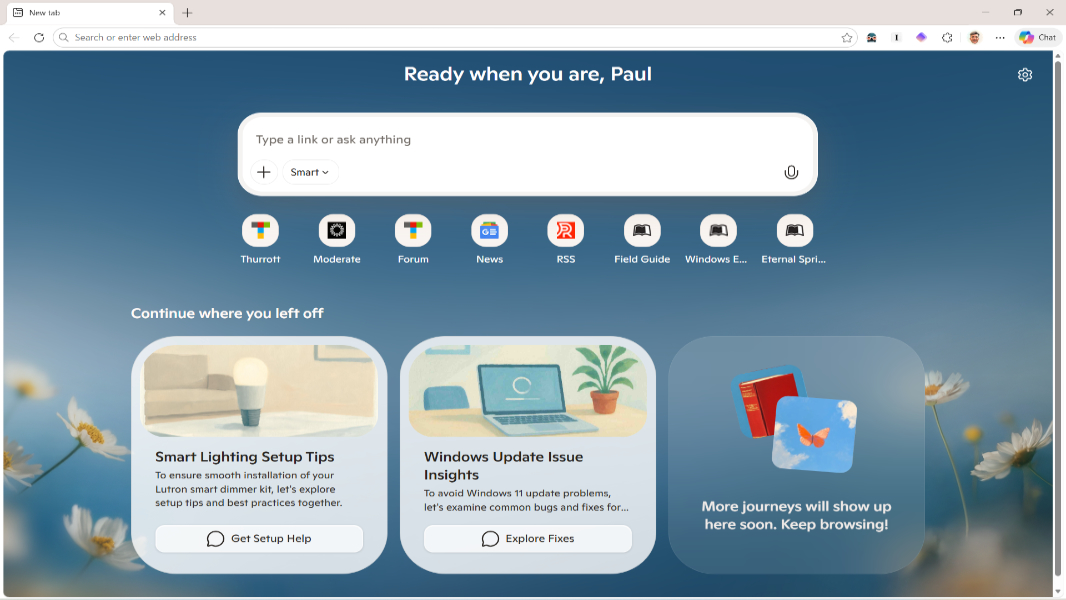

Gartner defines AI browsers as web browsers that incorporate two features: an AI sidebar that lets users summarize and otherwise interact with the content of the tab they’re viewing, and agentic capabilities that let the browser act autonomously on the user’s behalf, navigating the web and completing tasks on websites, including those that require authentication.

Ostensibly, Gartner is referring to an emerging but still niche group of “AI native” browsers like Perplexity Comet, Dia, Opera Neon, OpenAI ChatGPT Atlas, and others. But by Gartner’s own definition, Microsoft Edge, which ships for free in Windows, is now an AI browser. And Google is racing to update Google Chrome, the most popular web browser by far, with agentic capabilities.

The problems with AI browsers are many, but Gartner says the biggest threats are those that few understand today. In addition to obvious issues like users sharing private corporate data with a cloud-hosted AI, these browsers appear to be particularly susceptible to prompt injection attacks that can leak all kinds of data, including user credentials, exposing businesses and individuals to further danger.

Perhaps ironically, the new natural language capabilities that make AI so powerful and easy to use are tied to its biggest potential vulnerabilities because existing security controls aren’t designed to protect users engaged in these interactions. Gartner says that it will take “years, not months” to even understand the potential risks and that the ability to fully eliminate all risks is “unlikely” regardless of the time frame.

To be clear, Gartner’s advice is for business customers that manage fleets of users. But the warning should be taken to heart by individuals as well, since many users will need to share website credentials that have access to their personal information for these AI browsers to work correctly. And these AIs could be fooled into navigating to phishing and other malicious sites, opening users up to massive data loss without their direct involvement.

Gartner: All AI Browsers Should be Blocked for Foreseeable Future
