Dear Community,

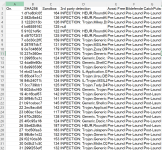

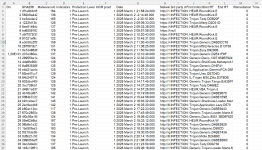

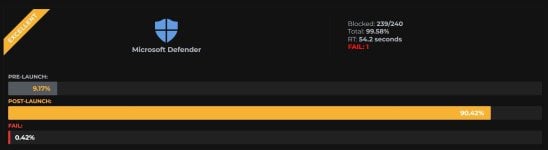

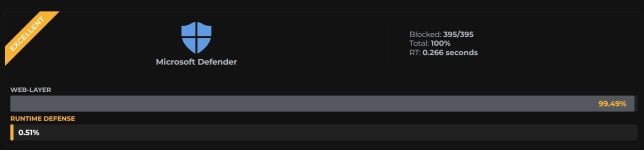

We have published the results for March 2026, and the article has been updated with additional technical details. In each edition of this series, we will show how the tested solutions perform in their management console or other central incident management platform.

In this edition, we’re also including Surfshark One with antivirus as an all-in-one solution, as well as CatchPulse Pro and Microsoft Business + EDR.

- Publication: Advanced In-The-Wild Malware Test in March 2026 including effectiveness analysis and telemetry » AVLab Cybersecurity Foundation

- Results: Recent Results » AVLab Cybersecurity Foundation

The evaluation includes a range of factors:

- telemetry,

- process correlation,

- host linking in lateral movement,

- command-line visibility,

- AI assistant evaluation,

- attack chain visualization,

- and many others that are useful to a Security Operations Center (SOC).