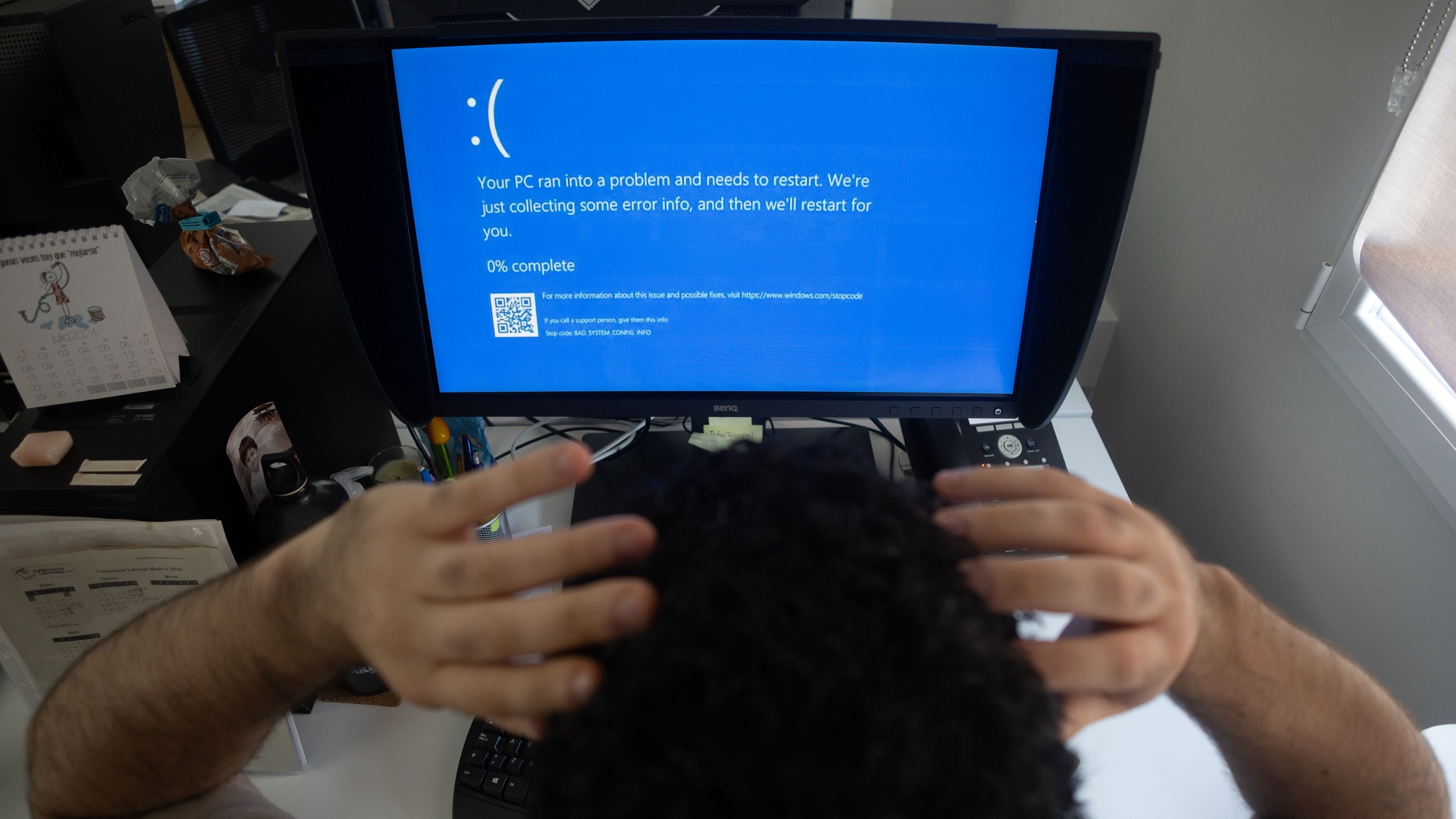

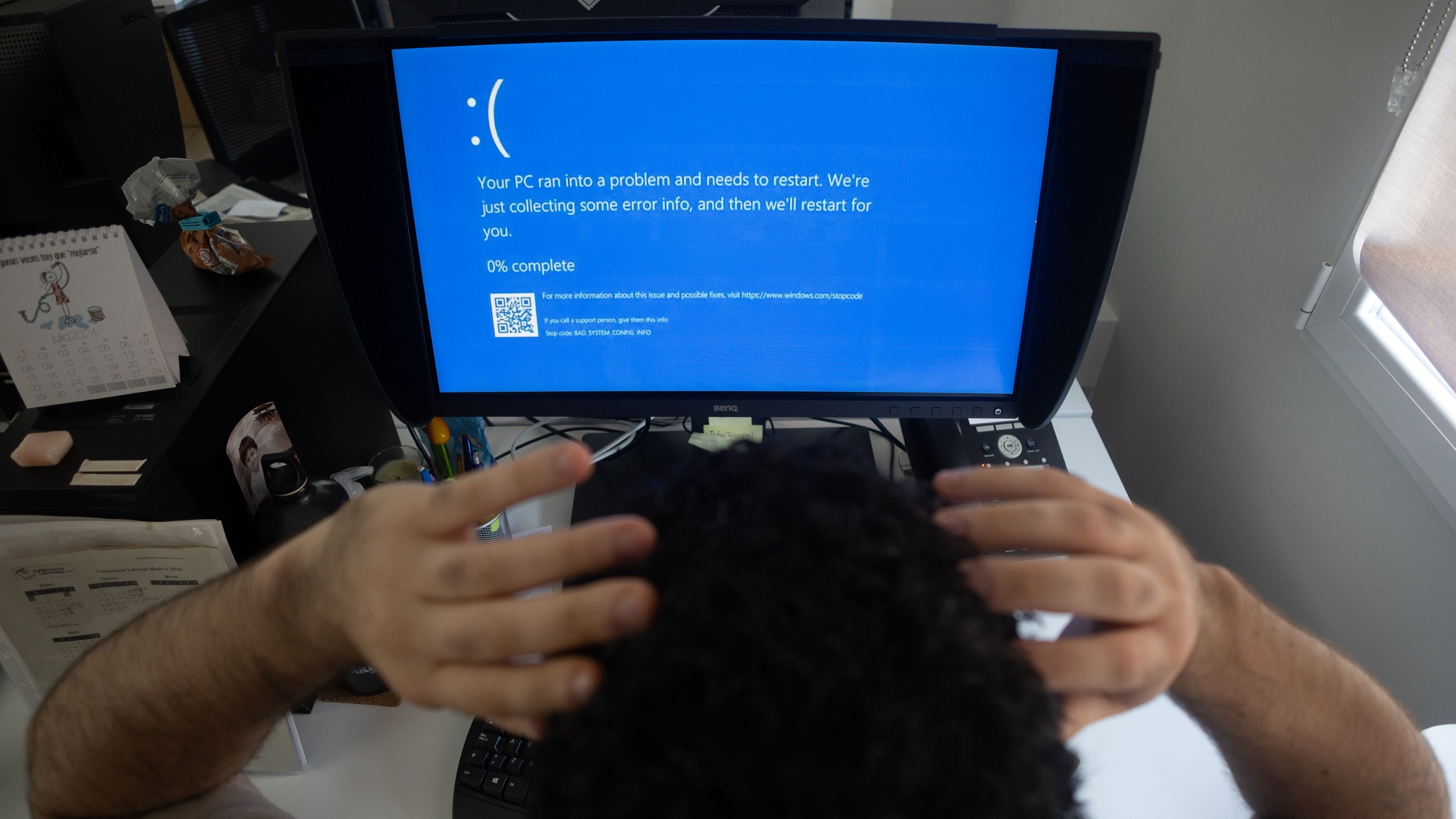

A new report reveals that AI-developed malware—created using just three months of reinforcement learning and a budget of around $1,600—can successfully circumvent Microsoft Defender about 8% of the time. Let that sink in.

- The model was built on Qwen 2.5, an advanced language model, and trained within a sandbox environment running Defender for Endpoint. Through iterative testing, it learned to produce malware that evades detection about 8% of the time.

- In contrast, other AI models (Anthropic’s Claude, DeepSeek’s R1) showed less than 1% success, which makes the trained Qwen 2.5 model's 8% stand out.

- This experiment highlights a growing risk: as AI tooling enters the cybercrime realm, malware could rapidly become smarter, adapting to defenses with little human input.
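To put the 8% figure in perspective, here is a rough back-of-the-envelope sketch in Python. It assumes every generated sample is an independent try with the same 8% chance of slipping past Defender, which is my simplification and not something the report states. The function name `p_at_least_one` is made up for illustration.

```python
def p_at_least_one(rate: float, attempts: int) -> float:
    """Chance that at least one of `attempts` independent samples evades detection.

    Assumption (not from the report): every sample has the same, independent
    evasion probability `rate`.
    """
    return 1.0 - (1.0 - rate) ** attempts


# Compare the reported ~8% rate with a 1% rate (the upper bound quoted for the other models).
for n in (1, 10, 50):
    trained = p_at_least_one(0.08, n)
    baseline = p_at_least_one(0.01, n)
    print(f"{n:>3} samples: 8% rate -> {trained:.1%}, 1% rate -> {baseline:.1%}")
```

The point is only that a per-sample rate that looks small adds up quickly once many samples are tried. Real detection is not independent from sample to sample, so treat these numbers as a rough intuition, not a measurement.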

Debate Sparks:

- Security vs. AI Arms Race: If AI can outsmart our best antivirus after just a few months of training, do traditional AV models even stand a chance?

- Home User Risk: Most home PCs rely on Defender—are we already vulnerable without realizing it?

- Raising the Bar: Should Microsoft and other AV vendors integrate AI to counter AI threats, or are we fighting a losing battle?

- Transparency: How much do we need to know about AI-based attacks before deciding to "stay offline" or apply extra tools?

Read more:

AI-powered malware eludes Microsoft Defender's security checks 8% of the time — with just 3 months of training and "reinforcement learning" for around $1,600

AI-assisted malware can apparently bypass Microsoft Defender for Endpoint without relying on the internet for its training data.

www.windowscentral.com