
ForgottenSeer 123960

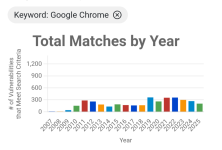

NVD Query: Google Chrome 2025 Vulnerabilities

I can’t give you a verified “Chrome vs Firefox CVE count for 2025” in this chat, because I don’t have live database access here and I won’t make up numbers.

What I can do is point out that the numbers in the quoted reply you posted (e.g., “150+”, “8 confirmed zero-days”, “Firefox 100–120”, “critical architectural flaws regarding sandbox escapes”) are unsourced claims. Without links to NVD entries or vendor advisories, treat them as unreliable.

If you want the actual counts for 2025 (fastest reliable way)

- Use NVD’s search and filter by Published date 2025-01-01 through 2025-12-31 for:

- “Google Chrome”

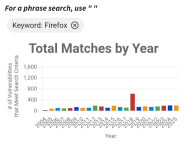

- “Mozilla Firefox”

- For Chrome, decide whether you’re counting “Google Chrome” only, or “Chrome + Chromium” (many browser CVEs are filed under Chromium).

- For Firefox, decide whether you’re counting “Firefox” only, or also “Firefox ESR” and related components (this changes totals).
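If you would rather script the lookup than click through the site, here is a minimal sketch that only builds the query URLs. It assumes the NVD CVE API 2.0 endpoint and its `keywordSearch`, `pubStartDate`, `pubEndDate`, and `resultsPerPage` parameters, and it assumes the API caps a publication date range at 120 days, which is why the year is split into windows. Check all of that against the current NVD API docs before relying on it. The helper names (`date_windows`, `build_query_urls`) are made up for illustration.

```python
from datetime import datetime, timedelta
from urllib.parse import urlencode

# Assumption: NVD CVE API 2.0 endpoint; verify against the NVD developer docs.
NVD_API = "https://services.nvd.nist.gov/rest/json/cves/2.0"
# Assumption: the API rejects publication date ranges longer than 120 days.
MAX_WINDOW_DAYS = 120


def date_windows(start, end, max_days=MAX_WINDOW_DAYS):
    """Split the half-open range [start, end) into windows of at most max_days."""
    windows = []
    cursor = start
    while cursor < end:
        stop = min(cursor + timedelta(days=max_days), end)
        windows.append((cursor, stop))
        cursor = stop
    return windows


def _stamp(moment):
    """Format a datetime with millisecond precision, e.g. 2025-01-01T00:00:00.000."""
    return moment.strftime("%Y-%m-%dT%H:%M:%S.%f")[:-3]


def build_query_urls(keyword, year):
    """Return one query URL per window covering the whole calendar year."""
    urls = []
    for lo, hi in date_windows(datetime(year, 1, 1), datetime(year + 1, 1, 1)):
        params = {
            "keywordSearch": keyword,
            "pubStartDate": _stamp(lo),
            # End 1 ms before the next window starts so windows never overlap.
            "pubEndDate": _stamp(hi - timedelta(milliseconds=1)),
            # Only the total is needed, so request a single record per window.
            "resultsPerPage": 1,
        }
        urls.append(f"{NVD_API}?{urlencode(params)}")
    return urls


if __name__ == "__main__":
    for product in ("Google Chrome", "Mozilla Firefox"):
        for url in build_query_urls(product, 2025):
            print(url)
```

Fetching each URL and summing the `totalResults` field of the JSON responses (again, an assumption about the response shape) would give a per-keyword total. Note that a keyword search matches description text, so it will not line up exactly with a product filter, and the Chromium/ESR decision still has to be made by whoever runs it.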

What I can say safely (without exact numbers)

- In many years Chrome ends up with a higher raw CVE count than Firefox, largely due to Chromium’s massive codebase, aggressive disclosure, and how CVEs are assigned, not automatically because it’s “less secure.”

- Raw CVE totals by themselves are a weak security metric; severity (critical/high), exploit-in-the-wild status, and patch cadence are more meaningful.
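To make the "severity and exploitation matter more than the raw total" point concrete, here is a rough sketch of how a result set could be broken down. It assumes records shaped like NVD API 2.0 output (`cve.metrics.cvssMetricV31[].cvssData.baseSeverity`, plus a `cisaExploitAdd` field on entries that appear in CISA's Known Exploited Vulnerabilities catalog). Treat those field names as assumptions to verify. The sample records are invented placeholders, not real CVEs.

```python
from collections import Counter


def summarize(records):
    """Tally severity labels and count entries flagged as exploited in the wild."""
    severities = Counter()
    exploited = 0
    for item in records:
        cve = item.get("cve", {})
        # Assumption: CVSS v3.1 scores live under metrics.cvssMetricV31.
        v31 = cve.get("metrics", {}).get("cvssMetricV31", [])
        label = v31[0]["cvssData"]["baseSeverity"] if v31 else "UNSCORED"
        severities[label] += 1
        # Assumption: cisaExploitAdd is present only for KEV-listed entries.
        if "cisaExploitAdd" in cve:
            exploited += 1
    return severities, exploited


def _record(cve_id, severity=None, kev_date=None):
    """Build a placeholder record in the assumed NVD shape (illustration only)."""
    cve = {"id": cve_id, "metrics": {}}
    if severity:
        cve["metrics"]["cvssMetricV31"] = [{"cvssData": {"baseSeverity": severity}}]
    if kev_date:
        cve["cisaExploitAdd"] = kev_date
    return {"cve": cve}


# Invented placeholder data; the IDs and dates do not refer to real CVEs.
sample = [
    _record("CVE-0000-0001", "HIGH", kev_date="2000-01-01"),
    _record("CVE-0000-0002", "HIGH"),
    _record("CVE-0000-0003", "MEDIUM"),
    _record("CVE-0000-0004"),
]

if __name__ == "__main__":
    counts, kev = summarize(sample)
    print(dict(counts), "exploited:", kev)
```

Two browsers with the same raw total can look very different once the results are split this way, which is the comparison worth posting.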

If staff or members want a definitive “2025 total,” the correct next step is to post the two NVD result counts (and whether Chromium/ESR were included) along with links. Then the comparison is concrete instead of guesswork.

NVD Query: Mozilla Firefox 2025 Vulnerabilities